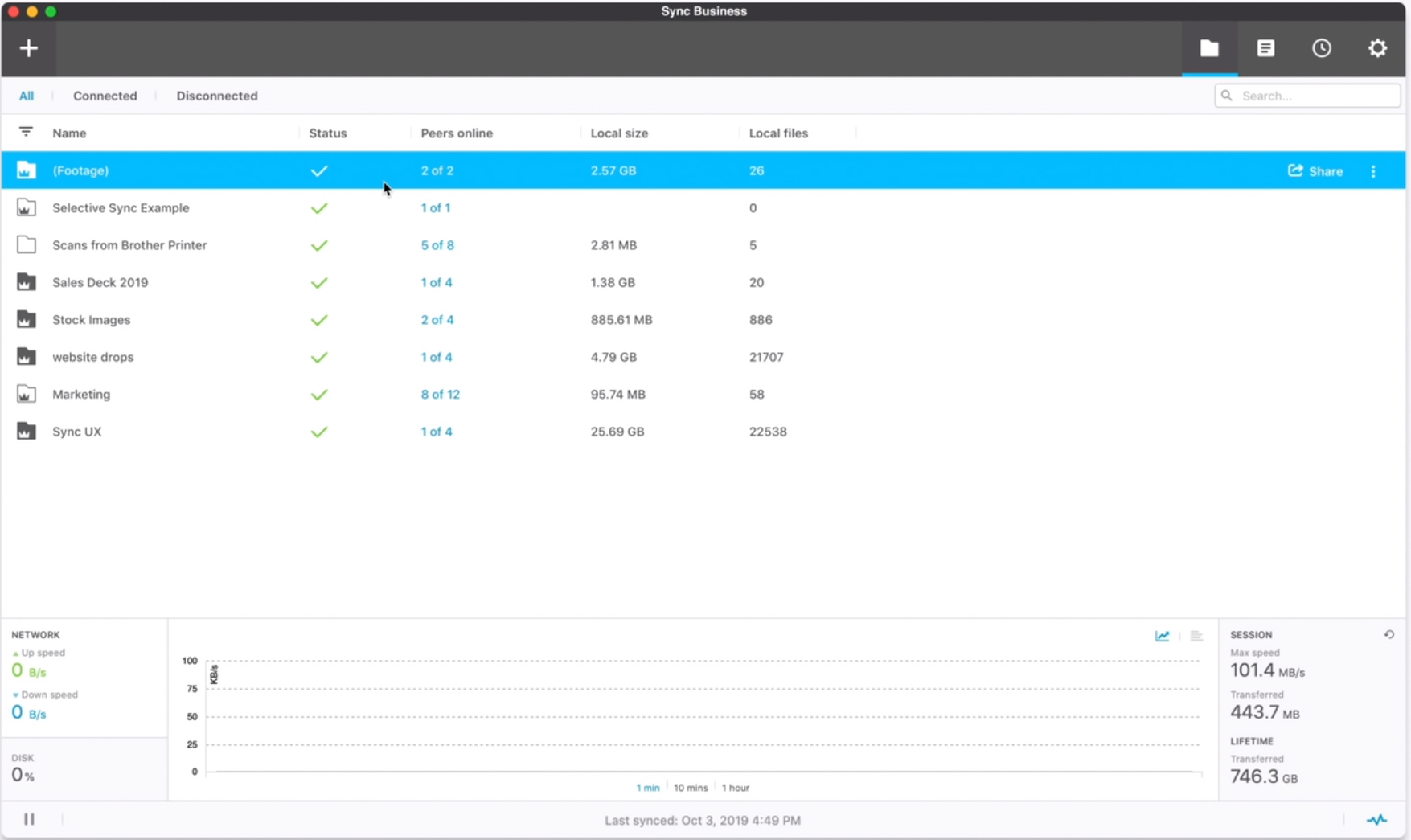

I recently worked with a client that had about 200GB of data, mostly smaller files like images or PDFs, stored on a web server. I used BitTorrent Sync to get all of that data from that server to a computer, with very little effort.

When I began working with them, they didn’t have any backups of the data at all – their webhost did daily backups, but those were pretty much inaccessible to us, apart from the ability to put in a request for a backup to be restored. My initial plan was simple: purchase a small, low-power computer with a 4TB hard drive in it, far more than enough for the 200GB of data and for future growth. I would then get the data from the server to the computer, and deliver the computer to the client’s office. They would then have a backup of all of their data on-site.

The question is, what software solution would be able to sync that amount of data, quickly and easily?

I had several requirements for this project, each of which was non-negotiable. It would later turn out that BitTorrent Sync would actually solve each of the issues quite well, although it did have a couple of small drawbacks that needed to be mitigated.

- Free (or cheap) – the solution would need to be extremely low-cost. It seems like this should be possible – the entirety of the internet is just bits flying around, so why wouldn’t I be able to move a huge amount of data from one computer to another for free?
- Fast – I long for the future where 200GB of data is laughed at as being ridiculously small. In the meantime, a slow connection could make this size of data transfer take weeks.
- Real-time – Ideally, every time a file gets changed on the server, it should get changed on the backup computer as well. This would prevent me from having to write a backup script to get changed files on a regular basis.
- Secure – Although my client doesn’t have to comply with strict data security regulations like HIPAA, I still don’t want my client’s data available on the larger internet. It belongs to my client, and to no one else.

I came up with a number of ideas, none of which were particularly good, but any of which may have worked.

My first idea was to use Dropbox – an easy-to-use, free service with which I was very familiar. The plan was to use a free Dropbox account, which gets me 2GB of space, and use it to transfer only the new files nightly. The client is using a custom-written application on their server, and luckily for me the program is still being developed. I was able to get the developer to copy all new files not only to the existing data directory but also to a new directory they made for me – I was going to tie that to Dropbox, so that all future data would be synced between the server’s Dropbox folder and the computer’s Dropbox folder. I would then have a script running every few minutes on the computer that would pull the data out of that folder and put it elsewhere – leaving the Dropbox folder as empty as possible for as much time as possible.

This probably would have worked – the initial sync would still be a problem, but I had another solution for that – just dump all the files into Dropbox anyway. It only has 2GB of space, but Dropbox doesn’t just die when you hit that point – it syncs 2GB and no more. Therefore, I could have dumped all the data in there and let 2GB of it sync, then every minute have the desktop pull data out, allowing for more of it to sync. It’s certainly not an elegant solution, but once it was done, I could continue the process with only the changed files, which would add up to under 2GB daily. There’s also a potential concern for how Dropbox would act if you put in a single file whose size is greater than 2GB – it wasn’t a concern with my data, which was a large number of tiny files. However, whether Dropbox would consistently allow large files to sync would definitely be a problem worth researching.

This plan was free, in that I would not be paying for a Dropbox for Business account. It certainly wouldn’t be fast, because it could only sync in 2GB chunks – although I could potentially get the data moving in and out relatively quickly (but that might have gotten the account flagged for suspicious activity). It would be relatively real-time – the files would be copied to the Dropbox directory as soon as they were uploaded to the server, and from there would begin syncing with the computer immediately. It would be secure – Dropbox is pretty secure as a platform.

Another idea would be to use FTP, or SFTP. We already had a FileZilla FTP server up and running on the server for ease of file transfer.
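For the Dropbox idea, the script that empties the synced folder every few minutes is the piece that is easiest to picture in code. What follows is only a rough sketch of how such a script might look, not something I actually ran: the function name `drain_folder`, the `min_age_seconds` cutoff, and the rule of skipping dot-prefixed entries (assumed to be sync-client metadata) are all my own illustrative assumptions.

```python
import shutil
import time
from pathlib import Path


def drain_folder(src: Path, dst: Path, min_age_seconds: float = 60.0) -> int:
    """Move settled files out of src into dst, keeping relative paths.

    Returns the number of files moved. Hypothetical helper for illustration.
    """
    if not src.is_dir():
        return 0

    moved = 0
    now = time.time()
    # Materialize the listing first so moving files does not disturb iteration.
    for path in sorted(src.rglob("*")):
        if not path.is_file():
            continue
        rel = path.relative_to(src)
        # Assumption: dot-prefixed files and folders belong to the sync client.
        if any(part.startswith(".") for part in rel.parts):
            continue
        # Assumption: a recently modified file may still be syncing, so leave it.
        if now - path.stat().st_mtime < min_age_seconds:
            continue
        target = dst / rel
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.move(str(path), str(target))
        moved += 1
    return moved
```

Something like `drain_folder(Path("path/to/Dropbox/incoming"), Path("path/to/backup"))`, with placeholder paths, could then be run every few minutes by whatever scheduler the backup computer provides. The sketch leaves empty directories behind in the source folder, and it does not handle a file that already exists at the destination in any special way.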
